OrionX

OrionX software provides a fine-grained, remote, and dynamically configurable virtualization solution for physical GPUs with almost no performance loss.

GEMINI AI Platform

The GEMINI AI Platform provides customers with powerful AI computing power management services and efficient algorithm development and training support.

VirtAI Cloud

Based on leading GPU virtualization technology, VirtAI Cloud connects global computing power to bring you a high-quality AI application development experience and greatly reduce your costs.

Company Profile

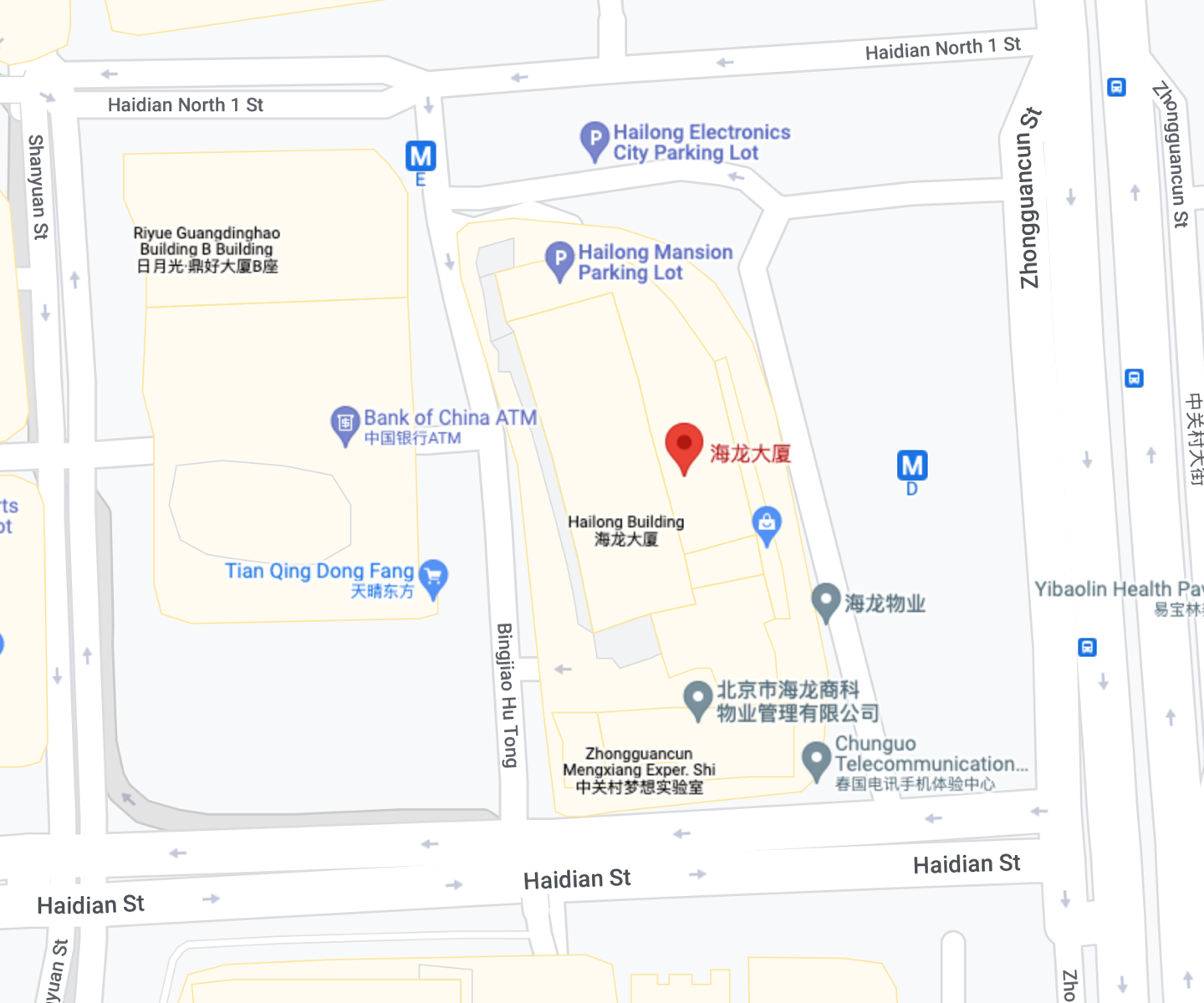

Founded in 2019 in the Zhongguancun High-tech Park in Beijing, Beijing VirtAI Technology Co., Ltd. has a professional research and development, operations, and service team, and was named among the Top 20 fastest-growing startups on the WISE2020 "New Infrastructure Entrepreneurship List". VirtAI focuses on building a data-center-level AI computing resource accelerator and an AI development platform for enterprise users.

VirtAI's OrionX AI computing resource acceleration software helps users improve resource utilization, reduce TCO, and improve the work efficiency of algorithm engineers. VirtAI's GEMINI AI training platform provides customers with powerful AI computing power management services and efficient algorithm development and training support, making it simpler for enterprises to build an AI platform, manage GPUs, and make good use of AI services.

Dr. Wang Kun, founder and CEO of VirtAI, said that with its standardized and replicable product architecture, VirtAI has been recognized by a large number of top customers across industries including the Internet, finance, telecom operators, scientific research institutions, and universities. The capital market has also backed VirtAI's development: a little over two years after its founding, the company had completed tens of millions of dollars in financing, with support from top investment institutions including PRO Capital, Prosperity7 Ventures, ORIZA Holdings, CMB International, SHUNWEI Capital, Hill house Group, Vision Knight Capital, GOBI CHINA, IFLYTEK, and YONGHUA Capital, all of which are witnessing VirtAI forge ahead.

Driving AI Future

The OrionX GPU pooling solution helps customers build a data-center-level AI computing resource accelerator, so that user applications can, without modification, transparently share and use AI computing power on any server in the data center. This not only helps users improve resource utilization but also greatly simplifies application deployment.

By sharing GPUs, you can maximize resource utilization and save up to 80% of your hardware costs.

Improve GPU utilization across the whole cloud and data center, save algorithm engineers' time, and optimize multi-machine, multi-card deployment models.

OrionX vGPU resources are allocated when an AI or CUDA application starts and are released automatically when the application exits. vGPU resources are released dynamically, without restarting the VM or container.

Applications can use OrionX vGPUs on remote physical nodes, so application deployment is not constrained by GPU server location or the number of resources. Fine-grained GPU virtualization is also supported.

OrionX is compatible with existing AI and CUDA applications, which need no modification to use it. It supports AI computing power from multiple vendors and brands, and reduces the complexity and cost of GPU management.

Contact us

Address

12/F, Building C, Shanghai Film Plaza, No. 595, Caoxi North Road, Xuhui District, Shanghai

Email

Phone

010-62560919